Online Communities & Subculture (DP IB Theory of Knowledge): Revision Note

Online communities & subculture

Online communities are groups of people who interact mainly through internet platforms, e.g.:

forums

social media

group chats

Subcultures are groups with shared values, interests and practices that feel distinct from “mainstream” culture; online spaces can help them form, grow and spread

Rapid knowledge creation

Online communities can create and spread knowledge quickly by pooling many people’s observations, experiences and skills

Speed can be helpful for practical problem-solving because ideas can be discussed and improved in real time

E.g. members of a hobby forum may collaboratively troubleshoot a common issue and refine the fix as more users test it

Speed can reduce reliability if claims are shared before being checked

Errors can also spread quickly if they are shared widely before being corrected

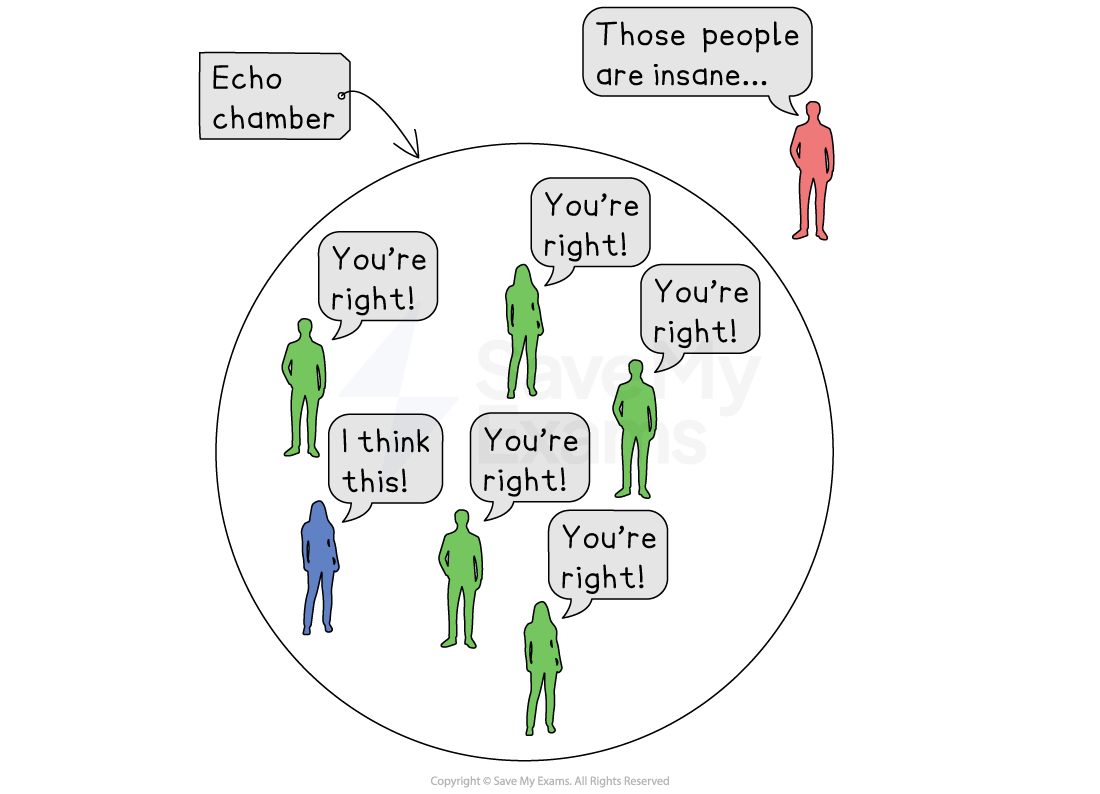

Echo chambers and group identity

Echo chambers form when people mostly see and share views that match their existing beliefs

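This occurs through self-selection (people choosing groups and accounts they already agree with) and platform algorithms that recommend content similar to what a user has already engaged with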

Ideas may be repeated frequently within an echo chamber, increasing a knower’s confidence in their accuracy

Familiar claims feel more believable even without strong evidence

Echo chambers can narrow what is treated as relevant evidence because members are more likely to dismiss outside sources as untrustworthy

E.g. in an online anti-vaccination group, members may reject a national public health agency’s safety data by calling it “government propaganda”, and may only accept links from the group’s preferred websites or influencers

Echo chambers can result in the development of group identity, i.e. a shared sense of “who we are” as a group

Group identity is based on common beliefs, values, language and in-jokes that shape members’ belonging and distinguish the group from outsiders

Moderation and misinformation

Moderation is the process of managing posts and users in order to shape content, using tools such as:

rules

removal of content

warnings to non-conforming members

banning members who don’t respond to warnings

Moderation can shape knowledge by deciding which sources are considered to be acceptable and which are seen as biased or unfair

Moderation can limit misinformation by removing false claims, labelling disputed content and prioritising reliable sources

E.g. a platform may add warnings to false health claims and provide links to verified public health guidance

Weak moderation can allow misinformation to spread, while heavy moderation can reduce open discussion

Resistance to outside critique

Online groups can show resistance to outside criticism due to distrust of outsiders, e.g. mainstream media, institutions or experts

When criticism threatens group identity, members may dismiss strong evidence to protect belonging and status within the group

Resistance can protect a community from prejudice or bad-faith criticism, but it can also block useful correction and reduce the accuracy of the community’s knowledge
